Every spring and fall when I visit Yosemite, I look up at Royal Arches from the valley floor and trace the line on the 2,000-foot cliff. It sits right next to the granite exfoliation arches facing Half Dome—striking, unmistakable. For years I’ve imagined getting on this all-time classic. This May, at a moment when I didn’t know what came next in my life, I finally did.

The climb happened serendipitously. I had just met John a few days earlier on Tahoe trip through a friend. He was very capable and great to climb with. He was heading to Yosemite after and invited people to join him; I didn’t have a fixed schedule and I love Yosemite, so I joined him. After climbing another valley classic and checking the weather, we decided on Royal Arches just two days before. Sometimes the biggest days come together like that.

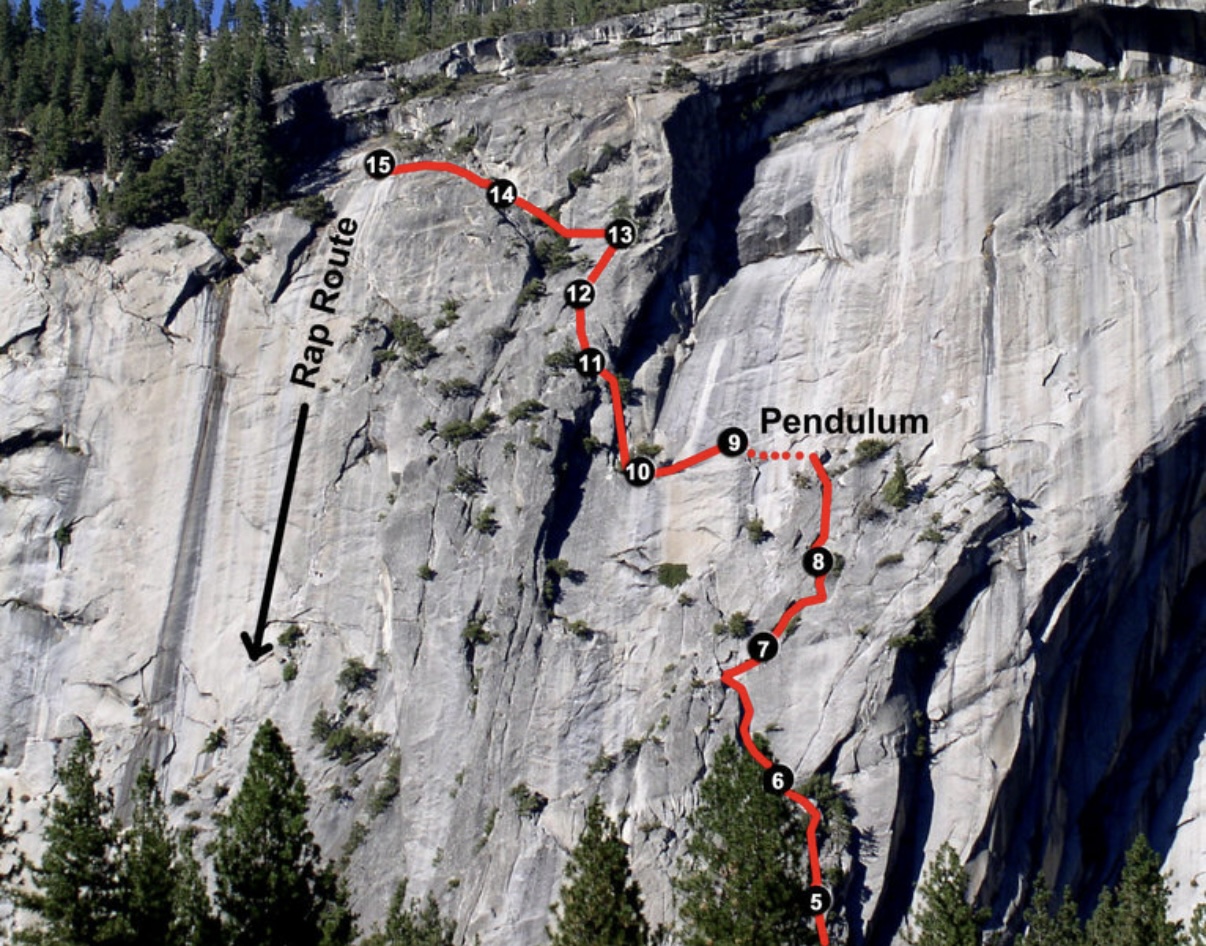

It was a 15-hour car-to-car day: 12 hours climbing, 2.5 hours rappelling. 350 meters of vertical gain across 15 pitches. I slept lightly the night before. A raccoon came into the tent and triggered my AirTag alarm. I was up before 5am anyway.

The first few pitches were enjoyable—chimney climbing, which I’d been nervous about since I don’t have much experience squeezing between giant rocks and pushing against each side with back, feet, and palms. It’s nothing like what’s in the climbing gyms. But it went fine. The morning was cool, and I led a few easy pitches. Mostly walking though, not the sustained climbing I crave. My mind wandered to what I’d been trying not to think about: my transition into research had been uncertain, and I might need a backup plan soon.

Because the climb was mostly easier than my grade, I wasn’t super focused. I spent energy worrying and rushing instead—about rappelling in the dark, about finding anchors I couldn’t see, about summer. Perched on a small granite platform with thousands of feet of nothing beneath me, reading the topo on my phone, I realized I need to get good at route-finding quickly—on Royal Arches and in my life. I felt confident about my skill, rope systems, and our teamwork, but I didn’t feel in control of our fate. Compared to my first Yosemite multi-pitch two years ago, I was more capable but less stoked—more worried because I know more about what can go wrong now. At least I wasn’t wishing I was somewhere else.

The turning point: The pendulum swing changed everything. Soon after lunch, we reached the base of the pendulum pitch. On my third attempt, I ran horizontally across the rock face, successfully reaching a far crimped on the left, and crossed to the other side. It was my first time running on a vertical rock wall. It demanded my full attention—and it was so fun. I finally felt peace afterward, and started noticing how beautiful the valley looked. The pendulum was the answer I needed in a way: when the terrain requires everything you have, there’s no room for worry. Other highlights followed—the airy traverse at the end of pitch 11, the pin scar climb, strenuous corner laybacks. John led all the hard pitches. I could not have done this climb without his knowledge and steady leading.

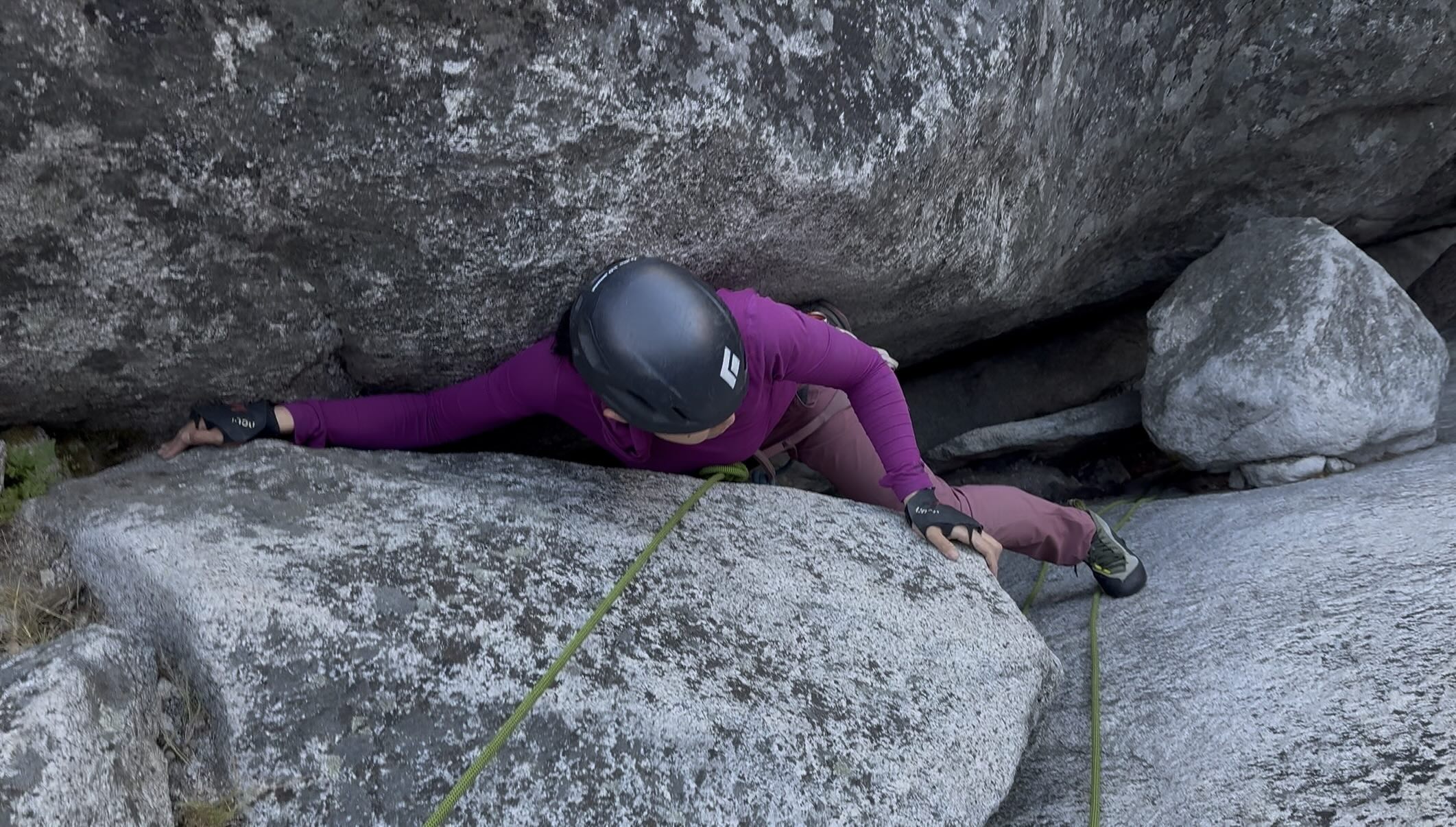

The other memorable pitch I led was a horizontal traverse—pitch 11—getting over a big bulge off the cliff. The sequence starts by climbing on top of the bulge, where the crack between it and the main cliff is the only place to put protection. The hard part: the bulge was taller than me when I started, so I had to climb it blind, unable to see what’s ahead. The ultimate test of solving problems as they come. None of the moves were difficult, but I felt shaky.

The traverse soon turned into a right-to-left horizontal hand-sized crack. I inched forward hand-over-hand, one hand jaming in the crack to keep myself on the rock, one reaching forward while pressing my feet hard against the rock so that the opposing pressure kept me stable. Nothing but air beneath my feet.

Like any trad lead, it was a balance between placing protection and preserving energy. More gear means a safer fall—if I peeled off while moving horizontally, I’d only swing back to my last piece instead of taking a massive pendulum. But placing gear in a horizontal crack wasn’t fast for me. I was hanging on the friction between one hand and the crack, feet not helping much, and back started to tire. I’m not the best endurance climber.

At one point, really straining, I looked down at my last piece and mentally calculated how bad the swing would be. Even though I trusted the gear would hold, the swing would slam me into the rock to my right. That thought made me tense up. I gritted my teeth and kept moving left.

There was a foot rail at next—it felt so thin that if I stayed static, I’d slip off. So I didn’t stay static. I kept going. Just as I was about to lose my balance, I suddenly noticed a tree branch right in front of me, growing out of the platform I needed to reach. I grabbed it, stabilized, scrambled onto solid ground, and let out a long exhale.

Looking back, the drama was probably mostly in my head. I psyched myself out. It was a beautiful hand crack, and I wish I had enjoyed it more. As is often the case, when I’m excited about a climb and focusing entirely on figuring out the route and moving well, I usually enjoy the it. But if I fixate on what could go wrong, I tense up, making the climb harder, and thus more likely to fall. Still a work-in-progress on my mental game.

The surprise: The thing I’d worried about all day turned out to be a gift. Rappelling in the dark was serene—just spots of campfires below, some stars above. I immediately understood the appeal of El Cap climbers spending nights on the wall. The Royal Arches rappel is well bolted, our grigri simul-rappel system worked beautifully, and the darkness that had loomed over me all day became something peaceful.

This was my first taste of a long ascent from Yosemite valley. Short approach, high-quality climbing, a full day among Yosemite granite. I’m grateful for John’s partnership and for a climb that taught me something: I can study the topo, but I can’t see every hold from the ground. At some point I have to start climbing and trust that I’ll solve problems as they come and not let the fear of uncertainty distract me from the present moment.

Lessons: Place protection during easy climbs, and double-check footing. Without that, I would have stepped into the void under some dead leaves and fallen more than 20 feet. Also: eat more. I was definitely undereating—next time, more protein bars, fewer fig bars, and lunch before noon.

]]>

We rappelled to reach the base of the summit block before our final push.

We rappelled to reach the base of the summit block before our final push.